This is one of the funnier problems I have had in a while: one day I noticed that my editor was really slow when moving the cursor vertically:

(right: expected behavior, left: actual behavior on my machine)

A quick search on the internet seemed to suggest that this was due to syntax highlighting and recommended turning off cursorline to alleviate the problem. As I am using a semi-stable rolling-release Linux distribution and did have problems involving true-color syntax-highlighting before, I disabled cursorline (which did indeed help in reducing the slowness), dismissed the problem as an issue with vim, my terminal emulator or whatever and went back to what I was doing before, hoping the issue would go away with the next update.

However, this kept bugging me because a) I really like cursorline and b) my machine at work wasn’t having these problems, even though it had (in theory) exactly the same software/hardware-configuration as my home-box (I had recently upgraded the latter to match and was generally very happy with the newfound performance). So one day I started looking into this a little deeper: As a plain nvim (nvim -u NORC) was running fine, I started bisecting my config. While I didn’t put much hope into that, as I expected the issue to be caused by my syntax-highlighting package or color-scheme, I ended up with a pretty minimal reproducer config for the problem:

:highlight ExtraWhitespace ctermbg=red guibg=red

:match ExtraWhitespace /\s\+\%#\@<!$/

set cursorline

As the cursorline only exacerbates the problem, the real culprit seems to be the extra rule for highlighting trailing whitespace that I copied off the internet. Still, my work machine was performing fine with that config. After fiddling around with build options and library versions for a while to divine what made the machines behave differently, I did what I should have done much earlier and git-bisected the problem, ending up at this commit. Reverting it sure enough fixed my problem. Huh?

A bit more fiddling revealed that the calls to profile_passed_limit were causing the slowdown and the “bad” highlighting rule above was causing them to happen much more frequently (on the order of 1000x) on both machines, but the calls were taking much longer on my home-box for some reason.

profile_passed_limit calls os_hrtime, which is provided by libuv and in its Linux implementation calls clock_gettime(CLOCK_MONOTONIC, ...) to get the current timestamp with a reasonably high accuracy. Now, clock_gettime is strictly speaking a syscall, but as it is called frequently enough to be a bottleneck, Linux contains a mechanism to handle it in userspace, without doing a context switch, which makes it much faster. This mechanism, called vsyscall/vDSO, has been sufficiently covered elsewhere, so I’ll mostly defer to those explanations here. The core idea is that the kernel injects some data and a library for accessing it into each address space to implement some commonly used syscalls which don’t strictly need special privileges, such as clock_gettime, gettimeofday, time and getcpu (the set is different on each architecture), without invoking the kernel. A side-effect of this is that such calls are invisible to the ptrace syscall, and thus to the invaluable strace utility.
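To get a rough feeling for what one such call costs on a given machine, a small timing loop is enough. The following is only a sketch in Python, not the tool I used: the helper name `ns_per_call` is made up for illustration, and I am assuming that CPython's `time.clock_gettime` goes through libc's `clock_gettime` (and therefore through the vDSO when the fast path is available). Interpreter overhead is included in the result, so the absolute number is an upper bound; what matters is how it differs between two machines.

```python
import time


def ns_per_call(clock_id, calls=200_000):
    """Return the average wall time of one clock_gettime call, in nanoseconds.

    The figure includes Python's own call overhead, so treat it as a
    relative indicator rather than the raw cost of the kernel/vDSO path.
    """
    get = time.clock_gettime  # local alias keeps attribute lookup out of the loop
    start = time.perf_counter_ns()
    for _ in range(calls):
        get(clock_id)
    return (time.perf_counter_ns() - start) / calls


if __name__ == "__main__":
    cost = ns_per_call(time.CLOCK_MONOTONIC)
    print(f"clock_gettime(CLOCK_MONOTONIC): ~{cost:.0f} ns per call")
```

Running this on both machines should show a clearly higher per-call cost on the one that falls back to the real syscall.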

Sure enough, strace was showing clock_gettime calls on my desktop machine, but not on the work machine.

Let’s look at the code which ends up in the vDSO page for clock_gettime. We first try to get the requested value via a fast path and fall back to the syscall route if that is not possible for some reason. To debug this on my machine, I wrote a program which calls the code from the vDSO page directly to rule out any libc weirdness (I only later realized that the Linux kernel already supplies such a tool) and single-stepped it in a debugger. Sure enough, we fell back to the slow path. Why?

The x86 implementation for getting the CLOCK_MONOTONIC value gets the coarse time from the kernel data page (GTOD) and augments it using a precise clock accessible from user space, depending on the value of the vclock_mode field. Looking at it in the debugger revealed it to be 0, or VCLOCK_NONE, which triggered the fallback into the slow path. Why is it VCLOCK_NONE? vclock_mode is set in the function updating the GTOD page depending on the currently configured system clock source. We’d usually expect this clock source to be the TSC, but that should cause vclock_mode to be set to VCLOCK_TSC, which it isn’t. So what clock are we using?

$ cat /sys/devices/system/clocksource/clocksource0/available_clocksource

hpet acpi_pm

$ cat /sys/devices/system/clocksource/clocksource0/current_clocksource

hpet

Oops. Now everything makes sense, though: The HPET cannot be queried from userspace and requires the slow path through a syscall. But this is a modern system and it really should be able to use the TSC instead, why isn’t it?
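The same check can be scripted, which is handy if you want to run it after every resume. This is a minimal sketch in Python that reads the two sysfs files shown above; the function names `read_clocksources` and `describe` are hypothetical, and the messages only restate what this post found (TSC means fast path, HPET means syscall), not a complete list of clock sources the vDSO can handle.

```python
from pathlib import Path

# The sysfs directory queried with cat above.
SYSFS_DIR = Path("/sys/devices/system/clocksource/clocksource0")


def read_clocksources(sysfs_dir=SYSFS_DIR):
    """Return (current, available) clock sources as reported by sysfs."""
    base = Path(sysfs_dir)
    current = (base / "current_clocksource").read_text().strip()
    available = (base / "available_clocksource").read_text().split()
    return current, available


def describe(current, available):
    """Summarize the clock source situation in one line."""
    if current == "tsc":
        return "tsc is active: the vDSO fast path should be usable"
    if "tsc" in available:
        return f"{current} is active although tsc is available"
    return f"{current} is active and tsc is not offered"


if __name__ == "__main__":
    print(describe(*read_clocksources()))
```

On my home-box in its broken state this would report that hpet is active and tsc is not offered.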

$ dmesg

…

[34568.761895] TSC synchronization [CPU#0 -> CPU#1]:

[34568.761895] Measured 4155824952 cycles TSC warp between CPUs, turning off TSC clock.

[34568.761898] tsc: Marking TSC unstable due to check_tsc_sync_source failed

…

This seems to be a BIOS bug: After a reboot, the TSC is fine, but after a suspend/resume, the TSC is warped between cores, making it unusable and causing clock_gettime(CLOCK_MONOTONIC, ...) to take the slow path, which makes my editor scroll slowly. This was not where I expected to end up when I started debugging this issue. ;)
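If you want to check your own logs for the same symptom, the two telltale messages are easy to grep for. Here is a small Python sketch that pulls them out of dmesg output passed in as text. The function name `tsc_problems` is made up, and the patterns are based only on the lines quoted above; other kernel versions may word these messages differently.

```python
import re

# Patterns derived from the dmesg lines quoted above (an assumption:
# the exact wording may differ between kernel versions).
WARP_RE = re.compile(r"Measured (\d+) cycles TSC warp between CPUs")
UNSTABLE_RE = re.compile(r"tsc: Marking TSC unstable due to (.+)$")


def tsc_problems(dmesg_text):
    """Return (warp_cycles, reasons) found in the given dmesg output."""
    warps, reasons = [], []
    for line in dmesg_text.splitlines():
        warp = WARP_RE.search(line)
        if warp:
            warps.append(int(warp.group(1)))
        unstable = UNSTABLE_RE.search(line)
        if unstable:
            reasons.append(unstable.group(1).strip())
    return warps, reasons


if __name__ == "__main__":
    import sys

    # Usage: dmesg | python3 this_script.py
    print(tsc_problems(sys.stdin.read()))
```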

This also explains why this problem doesn’t show up on my work machine: There, I have a mainboard supplied by HP in their office series, while at home I got myself an ASRock Z170 Extreme4, which contains a different BIOS implementation. Some googling around revealed that other people were having similar problems on a slightly different board utilizing the same chipset. They received a patched BIOS from ASRock (after jumping through some hoops), which seems to have solved their problems. I was at first reluctant to deal with the support because I didn’t want to have to jump through the same hoops (installing Windows and whatnot), so I patched libuv to use CLOCK_MONOTONIC_COARSE, which doesn’t require a working TSC on x86 (a pretty ugly way to avoid the problem that only helps applications which use libuv, but that was good enough for the moment).
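The price of that workaround is resolution: the coarse clock only advances with the kernel tick. The following Python sketch (not the libuv patch itself) compares the two clocks. As far as I can tell, Python's `time` module does not export `CLOCK_MONOTONIC_COARSE`, so the numeric id 6 from `<linux/time.h>` is hard-coded here, which makes this Linux-only; the function name `compare_clocks` is made up.

```python
import time

# Linux-specific clock id from <linux/time.h>; hard-coded because the
# time module does not appear to provide a named constant for it.
CLOCK_MONOTONIC_COARSE = 6


def compare_clocks():
    """Return resolution and current reading of both monotonic clocks."""
    # Read the coarse clock first: it reports the time of the last tick,
    # so it should never be ahead of the precise reading taken afterwards.
    coarse_now = time.clock_gettime(CLOCK_MONOTONIC_COARSE)
    precise_now = time.clock_gettime(time.CLOCK_MONOTONIC)
    return {
        "precise_res": time.clock_getres(time.CLOCK_MONOTONIC),
        "coarse_res": time.clock_getres(CLOCK_MONOTONIC_COARSE),
        "precise_now": precise_now,
        "coarse_now": coarse_now,
    }


if __name__ == "__main__":
    info = compare_clocks()
    print(f"CLOCK_MONOTONIC        resolution: {info['precise_res']:.9f} s")
    print(f"CLOCK_MONOTONIC_COARSE resolution: {info['coarse_res']:.9f} s")
    print(f"coarse clock lags by: {info['precise_now'] - info['coarse_now']:.6f} s")
```

For a timeout check like the one in profile_passed_limit, a resolution in the millisecond range is plausibly good enough, which is why the hack was tolerable for me.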

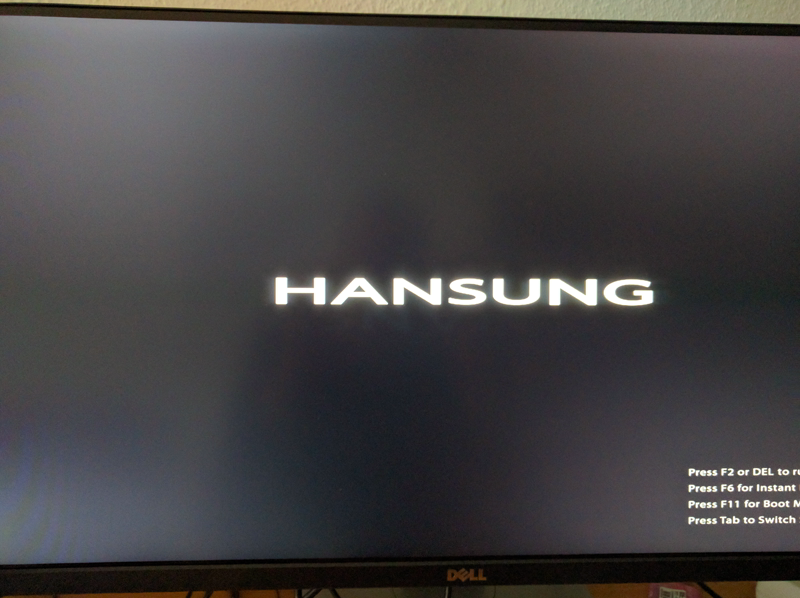

Last weekend though, I decided to give it a shot and contacted the support, referencing that forum thread and some of the above debugging details in the hopes of sparing me the first-level support. This seems to have worked, as I received a prompt email from the ASRock support, with a patched BIOS attached. However, it seems they accidentally sent me an Asian OEM version:

And the box didn’t boot any further afterwards. However, another pleasantly short email roundtrip later, I received another BIOS image, which did indeed solve the TSC problem and everything that resulted from it.

However, it seems they are not necessarily going to roll that up into the next official release:

> Will this fix be included in the next public BIOS release?

The bios 7.00a we provide is a special modified version of 7.00

These special changes might not me implemented in next (official) BIOS.

Anyway, we recommend users do not update BIOS if system is working well.

Anyway, if somebody runs into the same problem, here’s the version I received. Also, in case I or somebody else is bored and wants to diff the images, here’s the original version archived from their FTP.